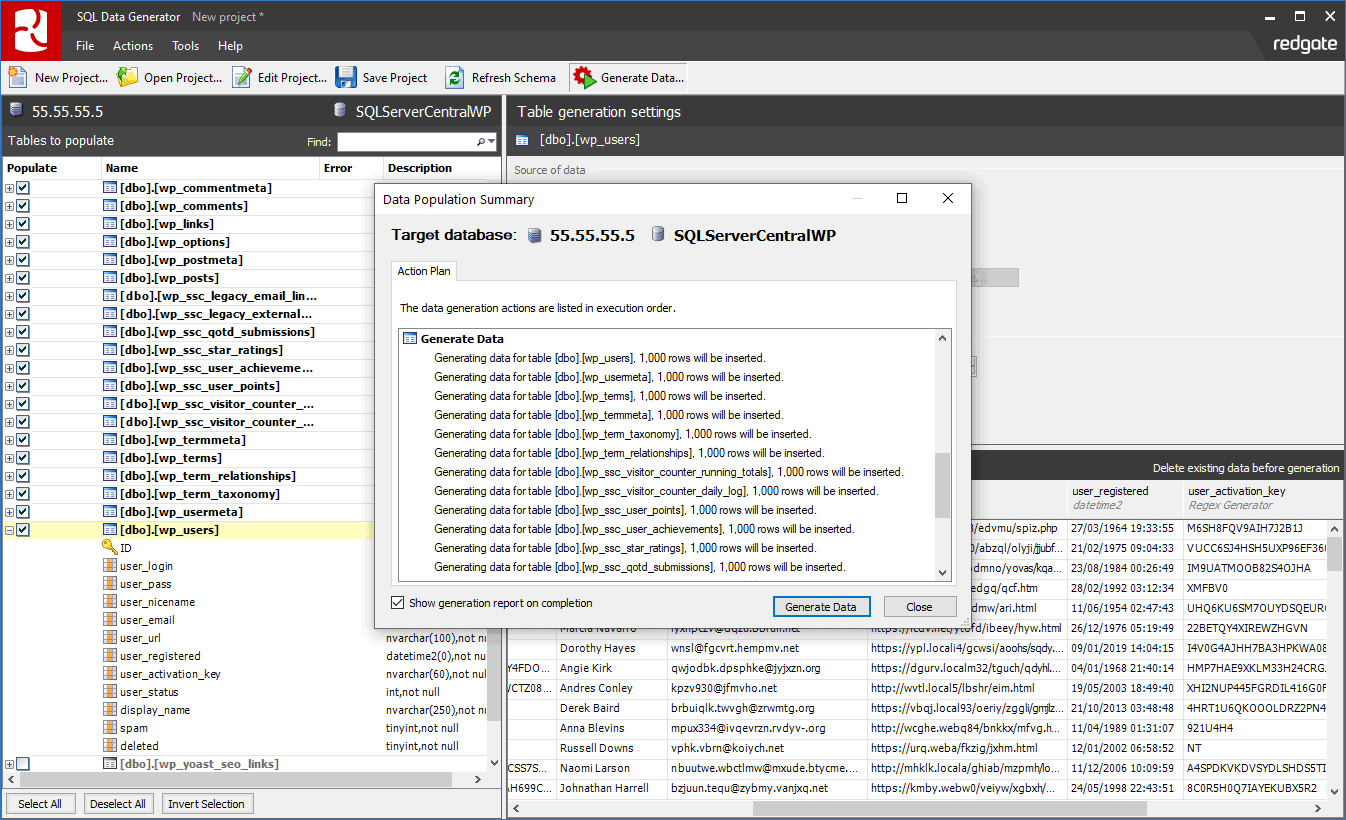

`("delta").mode("overwrite").save("output path")`

But the most incredible thing isn’t the ease with which synthetic data is generated. The most incredible thing is being able to generate synthetic data that relates to other synthetic data: consistent primary and foreign keys of the kind you will most likely encounter in a production operational system. In my previous experience, generating synthetic data has been a costly exercise which largely wasn’t worth the hassle.
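To make the idea of related synthetic data concrete, here is a minimal sketch in plain Python. It is not the Data Generator’s API. The table names, column names and the `1000` key offset are all hypothetical, chosen only to show child rows drawing their foreign keys from the same key range as the parent rows.

```python
import random

# Hypothetical offset for the parent table's primary keys.
KEY_OFFSET = 1000


def build_customers(customer_count):
    """Parent table: one row per customer with a predictable primary key."""
    return [
        {"customer_id": KEY_OFFSET + i, "name": f"customer_{i}"}
        for i in range(customer_count)
    ]


def build_orders(order_count, customer_count, seed=42):
    """Child table: every foreign key falls inside the parent's key range."""
    rng = random.Random(seed)  # seeded so repeated runs give identical data
    return [
        {
            "order_id": i,
            "customer_id": KEY_OFFSET + rng.randrange(customer_count),
            "amount": round(rng.uniform(5, 500), 2),
        }
        for i in range(order_count)
    ]


customers = build_customers(5)
orders = build_orders(50, 5)
```

Because both tables derive their keys from the same range, every order joins to exactly one customer, which is the property that makes synthetic data usable against a relational model.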

Although the generator is a Python framework, once data has been generated you can use any of the other languages supported by Databricks to consume it.

In order to generate data, you define a spec (which can imply a schema or use an existing schema) and seed each column using one of a series of approaches, for example based on the value of one or more base fields.

You can also iteratively generate data for a column by applying transformations on the base value of the column, such as mapping the base value to one of a set of discrete values, including weighting.

The generator is currently installed to a cluster as a wheel and then enabled in your notebook.

Finally, we use the build command to generate the data into a dataframe which can then be written and used by other processes or users.
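The two ideas above, seeding a column from a base value and mapping that base value onto a weighted set of discrete values, can be sketched in plain Python. This is an illustration of the technique only, not the Data Generator’s own API. The function names, column names and weights are assumptions I have made up for the example.

```python
from bisect import bisect_right
from itertools import accumulate


def weighted_value(base_value, values, weights):
    """Map an integer base value onto one of `values`, honouring `weights`."""
    cumulative = list(accumulate(weights))  # [6, 3, 1] becomes [6, 9, 10]
    slot = base_value % cumulative[-1]      # position within one weight cycle
    return values[bisect_right(cumulative, slot)]


def build_rows(row_count):
    """Generate rows whose columns are all derived from the base id."""
    rows = []
    for base_id in range(row_count):
        rows.append({
            "id": base_id,
            # Hypothetical column: an arithmetic transformation of the base value.
            "account_no": 100000 + base_id * 7,
            # Hypothetical column: a weighted mapping onto discrete values.
            "status": weighted_value(
                base_id, ["active", "dormant", "closed"], [6, 3, 1]
            ),
        })
    return rows


rows = build_rows(100)
```

With weights of 6, 3 and 1, every block of ten consecutive base values yields six `active`, three `dormant` and one `closed`. The mapping is deterministic, so the same base value always produces the same output, which keeps the generated data repeatable.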

Databricks Labs

Databricks Labs is a relatively new offering from Databricks which showcases what their teams have been creating in the field to help their customers. As a Consultant, this makes my life a lot easier as I don’t have to re-invent the wheel and I can use it to demonstrate value in partnering with Databricks. There are plenty of use cases that I’ll be using, and extending, with my client but the one I want to focus on in this post is the Data Generator.

The Data Generator aims to do just that: generate synthetic data for use in non-production environments while trying to represent realistic data. It uses the Spark engine to generate the data, so it is able to generate a huge amount of data in a short amount of time. It is a Python framework, so you have to be comfortable with Python and PySpark to generate data.

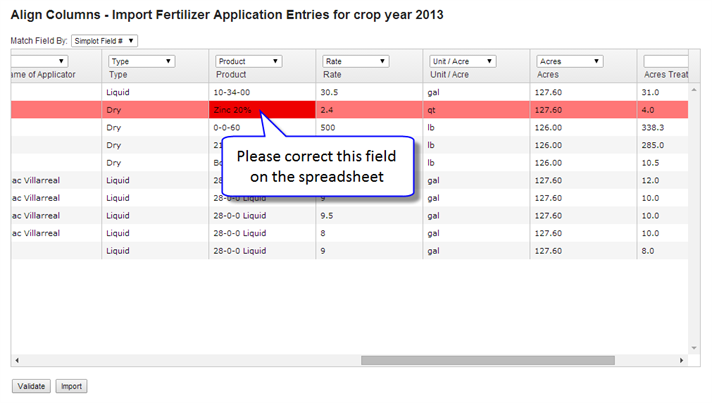

Databricks recently released the public preview of a Data Generator for use within Databricks to generate synthetic data. This is particularly exciting as the Information Security manager at a client recently requested synthetic data to be generated for use in all non-production environments as a feature of a platform I’ve been designing for them. The Product Owner decided at the time that it was too costly to implement any time soon, but this release from Databricks makes the requirement for synthetic data much easier and quicker to realise and deliver.

An Instancio Model is an object template expressed via the API. Objects created from a model will have all the model’s properties. A model can be created by calling the toModel() method, as shown in the following example:

    Model<Student> studentModel = Instancio.of(Student.class)
        .generate(field(Student::getDateOfBirth), gen -> gen.temporal().localDate().past())
        .generate(field(Student::getEnrollmentYear), gen -> gen.temporal().year().past())
        .generate(field(ContactInfo::getEmail), gen -> gen.text().pattern("#a#a#a#a#a# "))
        .generate(field(Phone::getCountryCode), gen -> gen.string().prefix("+").digits().maxLength(2))
        .toModel();

With the model defined, we can now use it across all our test methods. Each test method can utilize the template as the base and apply customizations as needed. Let’s assume we’re testing a method that requires a student who has taken ten courses and achieved either grade A or B in all the courses.
